
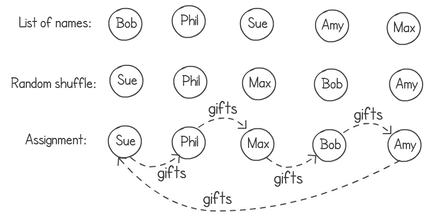

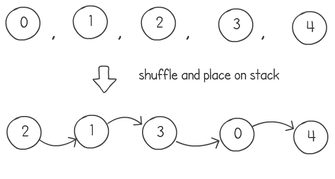

A few months ago I wrote about an algorithm to produce a random shuffle of an array. The idea was to use this algorithm for the Secret Santa game, where you have to buy a small gift for another team member. The following diagram illustrates how it works in two steps. First we shuffle the list of names in an array, then we connect each name to the next, and the last name back to the first. In this diagram Sue would buy a gift for Phil, Phil for Max and so on until we get to the end and Amy buys for Sue.

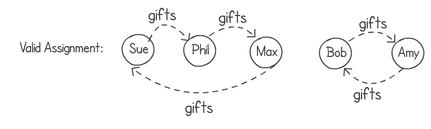
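As a rough sketch of the two steps in the diagram (the function name `secret_santa_ring` and the sample names are only for illustration), the shuffle-then-chain idea could look like this in Python:

```python
import random

def secret_santa_ring(names):
    """Shuffle the names, then link each name to the next one in a ring."""
    shuffled = list(names)      # copy so the caller's list is left untouched
    random.shuffle(shuffled)
    # Each person buys for the next one; the modulo wraps the last back to the first.
    return {
        giver: shuffled[(position + 1) % len(shuffled)]
        for position, giver in enumerate(shuffled)
    }

pairs = secret_santa_ring(["Bob", "Phil", "Sue", "Amy", "Max"])
for giver, receiver in pairs.items():
    print(f"{giver} buys a gift for {receiver}")
```

Whatever the shuffle produces, following the chain from any name visits everybody once before coming back to the start.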

A friend of mine, after reading this "not a blog", pointed out that this does not produce all of the possible random combinations. While the idea of shuffling the list of names and then chaining them together in a ring works, it always produces a single cycle. I have to point out that my friend, Jean Paul (https://degabriele.info/), a researcher in cryptography, knows a thing or two about randomisation. He’s right of course. The following example, which is made up of two separate cycles, would not be possible with my solution.

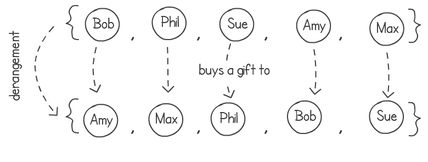

Turns out that my Secret Santa problem is actually a re-encoding of the random derangement problem in computer science. It’s quite an interesting one. The idea is to shuffle a list of elements in a way that no single item remains in the same position after the shuffling. Think about an array containing a bunch of names. After we shuffle them around, no name should be in the same place. We can then map the starting positions of each name to the finishing ones, showing who's buying a gift for whom. The next diagram shows a derangement that results in creating the two cycles shown in the previous example.
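To make the mapping concrete, here is a small sketch that takes a derangement written as a list of indices and splits it into its cycles. The helper name `find_cycles`, the sample names and the convention that the person at index i buys for the person at index `gift_map[i]` are assumptions for illustration.

```python
def find_cycles(derangement):
    """Split a permutation, given as a list of indices, into its cycles."""
    seen = set()
    cycles = []
    for start in range(len(derangement)):
        if start in seen:
            continue
        cycle = []
        current = start
        # Follow the mapping until we arrive back at an index we already visited.
        while current not in seen:
            seen.add(current)
            cycle.append(current)
            current = derangement[current]
        cycles.append(cycle)
    return cycles

names = ["Bob", "Phil", "Sue", "Amy", "Max"]
gift_map = [1, 0, 3, 4, 2]  # assumed convention: person i buys for person gift_map[i]
for giver, receiver in enumerate(gift_map):
    print(f"{names[giver]} buys for {names[receiver]}")
print(find_cycles(gift_map))  # [[0, 1], [2, 3, 4]]
```

Here Bob and Phil swap gifts between themselves while Sue, Amy and Max form a second ring, which is exactly the kind of result the single-ring approach can never produce.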

To be more precise, a derangement is a permutation of the elements of a set in which no element appears in its original position. There is a ton of maths theory around this. For example, if we just do a normal random shuffle on a list of items, the probability of ending up with a derangement approaches 1/e, or approximately 0.3679, as the list grows. The proof and theory can be found here:

https://en.wikipedia.org/wiki/Derangement
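The 1/e figure is easy to check by brute force for small lists. The following sketch (the function name `derangement_fraction` is just illustrative) counts how many of the permutations of n items are derangements, using `itertools.permutations` from the standard library:

```python
import math
from itertools import permutations

def derangement_fraction(n):
    """Fraction of all permutations of n items that leave no item in place."""
    total = 0
    hits = 0
    for candidate in permutations(range(n)):
        total += 1
        if all(index != value for index, value in enumerate(candidate)):
            hits += 1
    return hits / total

for n in range(2, 9):
    print(n, round(derangement_fraction(n), 4))
print("1/e =", round(1 / math.e, 4))
```

The fraction settles close to 1/e very quickly, so on average a plain shuffle needs fewer than three attempts to land on a derangement.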

We can easily implement an algorithm that keeps on shuffling until we have a derangement. Let’s do it in three steps. First let’s write something that generates the numbers 0 to n-1 in shuffled order. For example for n = 5, we can generate 4, 1, 3, 0, 2. We can later use this sequence to rearrange the elements of the list we want to shuffle. For example if we have the list ["Bob", "Phil", "Sue", "Amy", "Max"], applying the sequence 4, 1, 3, 0, 2 (position i takes the element at index sequence[i]) results in a final list of ["Max", "Phil", "Amy", "Bob", "Sue"]. Here’s the Python code to generate this shuffled index. This is simply Knuth’s shuffle algorithm on a list of sequential numbers. Notice how Python is one of the few languages where you can swap two elements in an array in a single line! Ah, the little joys in life...
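A minimal sketch of this first step could look like the following. The function name `shuffled_indices` is an assumption for illustration and is not necessarily what the original listing used.

```python
import random

def shuffled_indices(n):
    """Return the numbers 0..n-1 in random order (Knuth / Fisher-Yates shuffle)."""
    indices = list(range(n))
    # Walk from the end; swap each slot with a randomly chosen slot at or before it.
    for i in range(n - 1, 0, -1):
        j = random.randint(0, i)  # randint includes both end points
        indices[i], indices[j] = indices[j], indices[i]  # the one-line swap
    return indices

names = ["Bob", "Phil", "Sue", "Amy", "Max"]
order = shuffled_indices(len(names))
print(order)
print([names[i] for i in order])
```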


The next step is to check if the generated list is a derangement or not. To do this we can simply iterate over every single item in the sequence and check if the value is the same as its index. If it is for any of the elements, then the list is not a derangement. We can easily do this with a simple ‘for loop’.
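As a sketch, the check described above might be written like this (the name `is_derangement` is the one the post uses later on; the body is an assumption):

```python
def is_derangement(sequence):
    """Return True if no value in the sequence is equal to its own index."""
    for index, value in enumerate(sequence):
        if index == value:
            return False  # this element stayed where it was
    return True

print(is_derangement([4, 1, 3, 0, 2]))  # False, because 1 is still at index 1
print(is_derangement([1, 0, 3, 4, 2]))  # True
```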


Finally we can put the two together. We keep on generating shuffled sequences until we get one that is a derangement, and then rearrange the items of our original list using it.
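Putting the pieces together could look roughly like this. It is a self-contained sketch: `random.shuffle` stands in for the hand-written Knuth shuffle, and the function name `random_derangement_by_rejection` is made up for illustration.

```python
import random

def is_derangement(sequence):
    """Return True if no value in the sequence is equal to its own index."""
    return all(index != value for index, value in enumerate(sequence))

def random_derangement_by_rejection(items):
    """Shuffle index sequences until one of them is a derangement, then apply it."""
    if len(items) == 1:
        raise ValueError("a single item cannot be deranged")
    indices = list(range(len(items)))
    while True:
        random.shuffle(indices)  # stand-in for the Knuth shuffle step
        if is_derangement(indices):
            break
    # Position i of the result takes the item found at indices[i].
    return [items[i] for i in indices]

names = ["Bob", "Phil", "Sue", "Amy", "Max"]
print(random_derangement_by_rejection(names))
```

The guard for a single item is there because a list of length one has no derangement, so the loop would otherwise never finish.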


There are of course many better ways to implement this derangement algorithm. I'm putting a simple implementation here so it can be easily understood. This presentation outlines many other performance improvements:

https://www.cs.upc.edu/~conrado/research/talks/analco08.pdf

In our algorithm we “guess” a derangement sequence and, if it’s not a valid one, we throw it away. After implementing the above code, it got me wondering: is there an algorithm that doesn’t keep on trying until it finds a valid derangement? In other words, can we have one with a bounded worst-case time complexity?

If we have a way to enumerate derangements, we can then generate a random number n and simply choose the n’th derangement. But how do we enumerate derangements? Maybe a good place to start is to try generating permutations. Let’s say we have a sequence such as 0, 1, 2, 3 … n; how do we write some code that generates all permutations of this sequence? There is a simple way to do this. It’s called Heap’s algorithm. You can find all the details about it here; an implementation in Python follows: http://ruslanledesma.com/2016/06/17/why-does-heap-work.html
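A sketch of Heap's algorithm as a recursive Python generator is shown below. The function name `heap_permutations` and the choice to yield tuples are assumptions made for this example.

```python
def heap_permutations(sequence):
    """Yield every permutation of the sequence using Heap's algorithm."""
    items = list(sequence)

    def generate(k):
        # Permute the first k items in place; the rest stay fixed.
        if k <= 1:
            yield tuple(items)
            return
        for i in range(k - 1):
            yield from generate(k - 1)
            # The parity of k decides which element is swapped into the last slot.
            if k % 2 == 0:
                items[i], items[k - 1] = items[k - 1], items[i]
            else:
                items[0], items[k - 1] = items[k - 1], items[0]
        yield from generate(k - 1)

    yield from generate(len(items))

for permutation in heap_permutations(range(3)):
    print(permutation)
```

Each new permutation is produced from the previous one with a single swap, which is what makes the algorithm attractive.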


Next we can simply ignore anything that is not a derangement. We can re-use our is_derangement() function for this. If we modify the previous program by calling this function we’ll end up with something that lists all possible derangements.
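One possible way to sketch this filtering step is shown below. The generator names are illustrative, and the block repeats a compact Heap's algorithm so that it runs on its own.

```python
def is_derangement(sequence):
    """Return True if no value in the sequence is equal to its own index."""
    return all(index != value for index, value in enumerate(sequence))

def heap_permutations(sequence):
    """Yield every permutation of the sequence using Heap's algorithm."""
    items = list(sequence)

    def generate(k):
        if k <= 1:
            yield tuple(items)
            return
        for i in range(k - 1):
            yield from generate(k - 1)
            swap_from = i if k % 2 == 0 else 0
            items[swap_from], items[k - 1] = items[k - 1], items[swap_from]
        yield from generate(k - 1)

    yield from generate(len(items))

def all_derangements(n):
    """Yield only those permutations of 0..n-1 that are derangements."""
    for permutation in heap_permutations(range(n)):
        if is_derangement(permutation):
            yield permutation

for derangement in all_derangements(4):
    print(derangement)
```

For n = 4 this keeps 9 of the 24 permutations.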


Now we just need to return the n'th derangement. This is kind of where it gets complicated with the implementation. We need to modify our function so that it keeps track of how many derangements have been generated so far and returns the n’th derangement itself. In my implementation I changed the function so that it returns a tuple. I’m pretty sure there is a cleaner way to do this.
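One arguably cleaner variant, sketched here under a couple of assumptions, is to let a generator do the counting and use `itertools.islice` to skip ahead. Note that this is not the tuple-returning function described above, and that `itertools.permutations` stands in for Heap's algorithm, so the order in which derangements are numbered will differ.

```python
from itertools import islice, permutations

def derangements(n):
    """Yield the derangements of 0..n-1 in the order permutations() produces them."""
    for candidate in permutations(range(n)):
        if all(index != value for index, value in enumerate(candidate)):
            yield candidate

def nth_derangement(n, k):
    """Return derangement number k (counting from zero) of 0..n-1."""
    # islice skips the first k derangements; next() takes the one after them.
    found = next(islice(derangements(n), k, None), None)
    if found is None:
        raise IndexError("there are fewer than k + 1 derangements")
    return list(found)

print(nth_derangement(3, 0))  # [1, 2, 0]
print(nth_derangement(3, 1))  # [2, 0, 1]
```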


Before we modify our main function to choose a random nth derangement, we need to know the upper limit of the number of derangements of the sequence. That is, given n, find the total number of derangements of a set of n elements. Wikipedia tells us that this is the following recursive expression:

countDerangments(n) = (n - 1) * (countDerangments(n - 1) + countDerangments(n - 2))

So we can implement the following function to give us the upper limit (go dynamic programming!):
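A bottom-up sketch of that recurrence might look like this. The snake_case name `count_derangements` is an assumption, and the base cases (1 derangement of an empty set, 0 derangements of a single element) are the standard ones rather than something stated in the text above.

```python
def count_derangements(n):
    """Number of derangements of n elements, computed bottom-up."""
    if n == 0:
        return 1  # the empty arrangement counts as one derangement
    if n == 1:
        return 0  # a single element can only stay where it is
    previous, current = 1, 0  # D(0) and D(1)
    for k in range(2, n + 1):
        # D(k) = (k - 1) * (D(k - 1) + D(k - 2))
        previous, current = current, (k - 1) * (current + previous)
    return current

print([count_derangements(n) for n in range(7)])  # [1, 0, 1, 2, 9, 44, 265]
```

Only the last two values are kept, so the function runs in linear time and constant space.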


Finally we can put everything together. First we select a random number from 0 up to, but not including, the total number of derangements. Then we use the previously developed function to get the derangement at that position.
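The whole enumerate-and-pick approach can be sketched in one self-contained block. As before, the names are illustrative and `itertools.permutations` stands in for Heap's algorithm, which changes the numbering of the derangements but not the idea.

```python
import random
from itertools import islice, permutations

def count_derangements(n):
    """Number of derangements of n elements, via the recurrence."""
    previous, current = 1, 0  # D(0) and D(1)
    if n == 0:
        return previous
    for k in range(2, n + 1):
        previous, current = current, (k - 1) * (current + previous)
    return current

def random_derangement_by_index(n):
    """Pick a random position k, then walk the enumeration up to derangement k."""
    total = count_derangements(n)
    if total == 0:
        raise ValueError("no derangement exists for this n")
    k = random.randrange(total)  # 0 <= k < total
    candidates = (
        p for p in permutations(range(n))
        if all(index != value for index, value in enumerate(p))
    )
    return list(next(islice(candidates, k, None)))

print(random_derangement_by_index(5))
```

Because every position is equally likely to be picked, every derangement is equally likely to be returned, at the price of walking through up to n! permutations.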


Right, you’re probably thinking… “This is horrible” and you’re right. In fact I think this is my first time ever writing an algorithm with an O(n!) run-time complexity. The algorithm is pretty useless even for small values of n. Trying it with anything bigger than n=10 will have you waiting for a couple of lifetimes. But hey, sometimes we do things just because we can, not because it’s a good idea.

A colleague of mine, Bruno, came up with a brilliant idea to find a random derangement without throwing away guesses, with a fast run-time complexity. I’m going to try to explain it in a few steps using a sequence of numbers of length 5. As usual we start with a sequence 0,1,2 ... n. The first step is to copy this list, shuffle the copy with a normal Knuth shuffle and put the results on a stack:

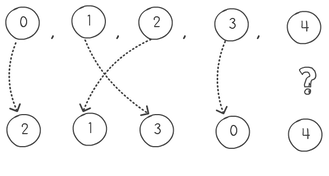

Next we pair each item of the initial sequence with an item from the stack. Pop one item from the stack, pair it with the current item of the sequence and move on to the next. If the two items are equal, pop another one, pair that one instead, and push the previous one back on the stack. In our example this produces the following pairing, meaning that 0 goes to index position 2, 1 goes to 3, 2 to 1 and 3 to 0. But we have a problem when we come to process the number 4: after we pop 4, our stack is empty and we cannot pop another item.

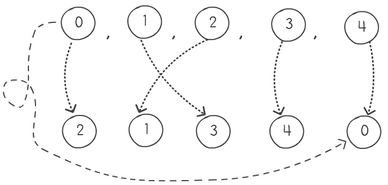

So what do we do? Easy; we always put the first item in our sequence as the bottom item in the stack. This way the last item will never pair with the same value.

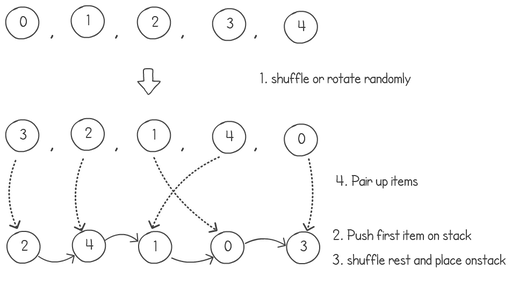

But I know what you're thinking... "The last item will always pair with the first item, it's not exactly random!". And you're right. The way to fix this is to do another shuffle on the first sequence or array-rotate it a random number of times. This way the first item on the list will always be randomly selected to match with a random selection at the end. Here are the 3 steps together.

The above example would have number 3 move to index position 2, 2 would go to 4 and so on. This gives us the derangement of [1,4,3,0,2]. Here is the complete python code of Bruno's random derangement algorithm:
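What follows is a sketch reconstructed from the prose description of the three steps, and it should not be read as Bruno's actual code. The function name `stack_derangement` is made up, and the choice to shuffle the sequence (rather than rotate it) is one of the two options mentioned above.

```python
import random

def stack_derangement(n):
    """Build a derangement of 0..n-1 by pairing a sequence with a shuffled stack."""
    if n == 1:
        raise ValueError("a single item cannot be deranged")
    sequence = list(range(n))
    random.shuffle(sequence)   # so the item tied to the stack bottom is random
    if not sequence:
        return []
    rest = sequence[1:]
    random.shuffle(rest)
    stack = [sequence[0]] + rest   # first item at the bottom; the top is the end
    result = [None] * n
    for item in sequence:
        position = stack.pop()
        if position == item:
            # Would leave the item in place: take the next one and push this one back.
            replacement = stack.pop()
            stack.append(position)
            position = replacement
        result[position] = item    # the item moves to index `position`
    return result

print(stack_derangement(5))
```

Each item is handled once and the stack operations are constant time, so the pairing is linear in n after the shuffles. The sketch makes no claim about how evenly the possible derangements are covered.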


Pretty neat eh? Follow my colleague Bruno on GitHub: https://github.com/brunosousarb And check out Jean Paul's research website, there are loads of great papers on it: https://degabriele.info/



Author: James Cutajar is a software developer with interests in high performance computing, algorithm design and distributed data structures.

April 2020
